Kelly Warner Law Firm Blames USA…

In what appears to be a desperate attempt to defend multiple…

By – USA Herald

Aaron Kelly Law Firm Resorts To…

Attorney Aaron Kelly and his law partner Daniel Warner are…

By – Jeff Watterson

Arizona Bar Opens Investigation on Attorney…

USA Herald recently reported on a developing story involving Attorneys…

By – Paul O'Neal

Josh Duggar Appeal Denied as Convicted…

Josh Duggar remains behind bars after a federal judge denied…

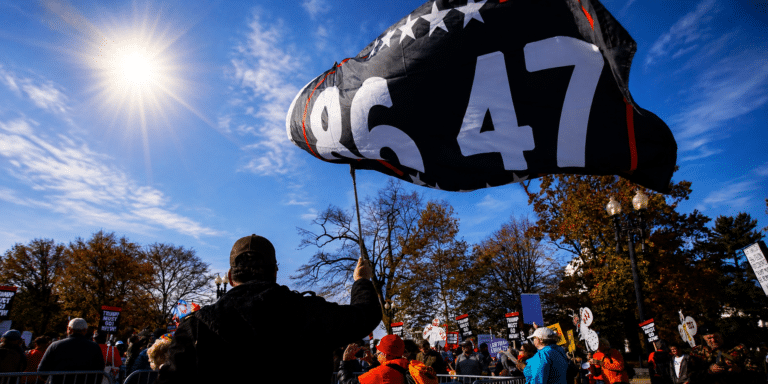

By – Jackie Allen

Federal Judge Lets ’86 47′ Flag…

An Obama-appointed judge just ruled a political group can keep…

By – Samuel Lopez

Sabrina Carpenter Granted Restraining Order Following…

Citing “severe emotional distress,” the American pop star has successfully…

By – Tyler Brooks

The Diddy Fallout: Cassie Fights Back…

As Sean “Diddy” Combs serves time behind bars, the shockwaves…

By – Tyler Brooks

South Carolina Jury Clears Store Owner…

A South Carolina courtroom erupted with emotion Monday after a…

By – Tyler Brooks

Archer Aviation: The eVTOL Takeoff Facing…

Strategic Analysis — June 2026 The electric vertical takeoff and…

By – Tyler Brooks

Sleeping Dog Documentary Chronicles Jeremy Corbell’s…

The new documentary Sleeping Dog arrives at a pivotal moment…

By – Jackie Allen

Kendall Jenner, Jacob Elordi and the…

I’ve been writing about royals and celebrities for 20 years.…

By – Nathan Kay

Chaotic Midnight Shooting Leaves 3 Bloodied…

Downtown San Jose gunfire wounds 3, sparks wild building crash…

By – Tyler Brooks

43-year-old Man Hospitalized After a Stranger…

Stranger shoots San Antonio man, 43, through door By Tyler…

By – Tyler Brooks

Hurricane Season Starts Today – Here’s…

Texas faces 20% hurricane risk as season begins By Tyler…

By – Tyler Brooks

U.S. Military Strike In The Eastern…

U.S. Pacific boat strike kills 3, casualties cross 200 By…

By – Tyler Brooks

Rare Blue Micromoon Lights Up the…

Skywatchers are in for a unique celestial event as a…

By – Jackie Allen

Murder-for-hire Ends with Life Sentence for…

A shocking murder-for-hire case that spanned multiple states has concluded…

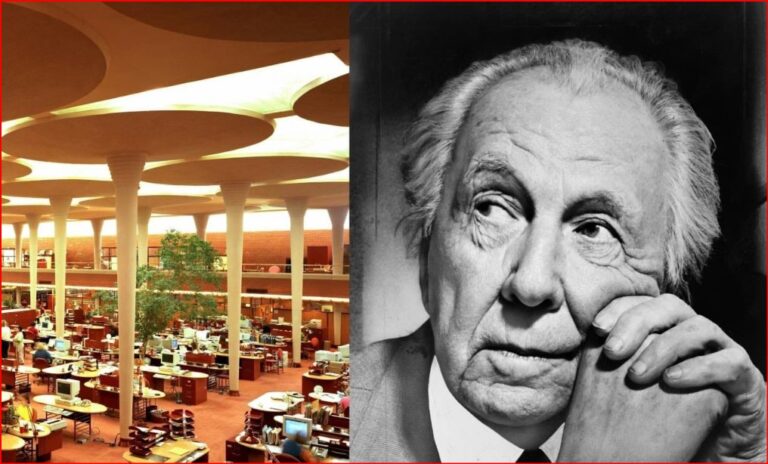

By – Jackie Allen

Frank Lloyd Wright and the Taliesin…

In The Killer and Frank Lloyd Wright, veteran true-crime author…

By – Jackie Allen

Hollywood at a Crossroads: Spencer Pratt…

Los Angeles has its primary election this Tuesday, and the…

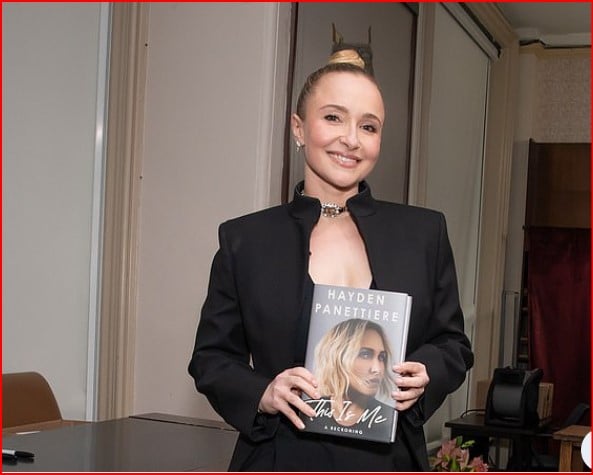

By – Jackie Allen

Hayden Panettiere has a Memoir About…

Hayden Panettiere is revealing the emotional toll of growing up…

By – Jackie Allen

Blue Origin Rocket Explodes in Massive…

Blue Origin suffered a major setback Thursday night when one…

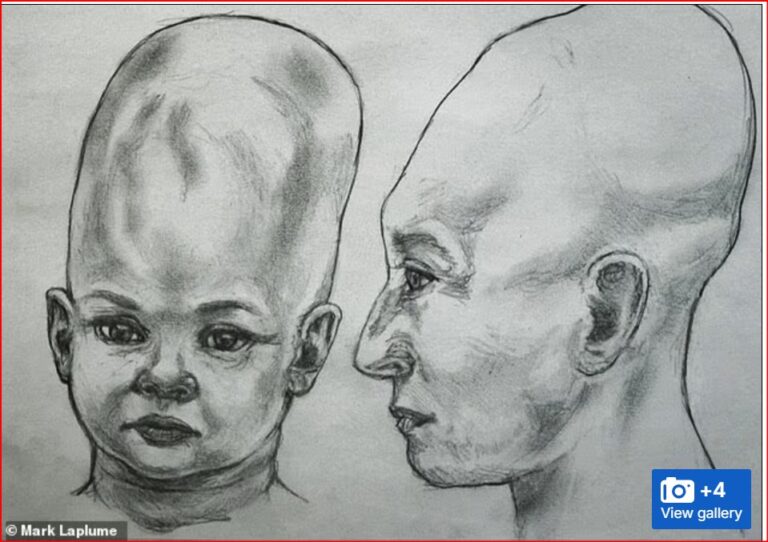

By – Jackie Allen

Alien Coneheads: New DNA Study Doesn’t…

The mystery surrounding the so-called Alien Coneheads of Peru has…

By – Jackie Allen

Trump’s Alien.gov Reveal Turns Into Immigration…

INSIDE THIS REPORT What millions thought would be a historic…

By – Samuel Lopez

Trump’s UFO files reveal mysterious flying…

The newly released UFO Files from the Trump administration have…

By – Jackie Allen

Who’s Lying? E. Jean Carroll Faces…

Author and columnist E. Jean Carroll is once again at…

By – Jackie Allen

Super El Niño: Will 2026 be…

Scientists across the globe are increasingly warning that a potential…

By – Jackie Allen

From a Casual Night Out to…

‘It Doesn’t Happen Here’: Quiet Suburb Left Shattered After Fatal…

By – Tyler Brooks

From Folklore to High Finance: The…

Wall Street and Global Powers Monetize UFO Craze By Tyler…

By – Tyler Brooks

Anthropic Files Historic IPO Triggering Fierce…

Anthropic Files Historic IPO Triggering Fierce Wall Street Ethics War…

By – Tyler Brooks

Manhunt underway for Florida felon Adriel…

Manhunt underway for Florida felon Adriel Martinez after release breach…

By – Tyler Brooks

Hawaii Warns Communities of Impending Kilauea…

Hawaii Warns Communities of Impending Kilauea Ashfall By Tyler Brooks…

By – Tyler Brooks

New Pill Doubles Survival for Pancreatic…

Pancreatic cancer pill doubles life to 13 months By Tyler…

By – Tyler Brooks

FDA warns public as cookie firm…

FDA warns public as cookie firm rejects urgent recall request…

By – Tyler Brooks

Trump orders CDC to slash childhood…

Trump orders CDC to slash childhood vaccines from 17 to…

By – Tyler Brooks

USDA warns Americans over Salmonella in…

USDA warns Americans over Salmonella in meat products By Tyler…

By – Tyler Brooks

GKN Aerospace’s Biggest Battle May Not…

By Samuel López | USA Herald The immediate danger of…

By – Samuel Lopez

Garden Grove Chemical Crisis Sparks Class…

By Samuel López | USA Herald A full-scale legal and…

By – Samuel Lopez

Wembanyama in Tears: Spurs Dethrone Thunder…

Spurs dethrone Thunder in epic Game 7 road victory By…

By – Tyler Brooks

Supreme Court signals 27 states could…

Supreme Court signals 27 states could ban trans female athletes…

By – Tyler Brooks

Mauricio Pochettino sounds alarm on Chris…

Mauricio Pochettino sounds alarm on Chris Richards injury By Tyler…

By – Tyler Brooks

USMNT star Chris Richards tears two…

USMNT star Chris Richards tears two ankle ligaments By Tyler…

By – Tyler Brooks

“Money” Mayweather Tucks Tail: $100 Million…

Floyd Mayweather has beaten every opponent who ever climbed into…

By – Samuel Lopez

Mackenzie Shirilla Sent Text Messages to…

Mackenzie Shirilla is once again at the center of public…

By – Jackie Allen