Kelly Warner Law Firm Blames USA…

5/17/17 Based on the information released in The Washington Post article…

By – USA Herald

Aaron Kelly Law Firm Resorts To…

Professor Volokh thereafter filed a bar complaint against Dan Warner…

By – Jeff Watterson

Arizona Bar Opens Investigation on Attorney…

USA Herald recently reported on a developing story involving Attorneys…

By – Paul O'Neal

Trial Over Deadly Palisades Fire Reopens…

More than 80 prospective jurors were sworn in Monday and…

By – Samuel Lopez

FAA Issues Ground Stop at San…

SAN FRANCISCO – A sudden ground stop was issued for…

By – Tyler Brooks

Synthesis: Bending Spoons Announces U.S. IPO

Why the “BSP” IPO Matters

For investors and market analysts,…

By – Tyler Brooks

David Grusch Returns To Capitol Steps…

By Samuel López | USA Herald Washington is about to…

By – Samuel Lopez

Jeremy Corbell Issues UFO Warning: Release…

If true, the implications could be historic. But they could…

By – Samuel Lopez

Spirit Airlines Bankruptcy Sparks Outrage as…

By Samuel López | USA Herald As thousands of former…

By – Samuel Lopez

Dismantling Claims Before Discovery: How Operational…

By Samuel López | USA Herald Artificial intelligence has become…

By – Samuel Lopez

Chinese Company Blocked from Claiming Olympic…

The case also reflects broader concerns about consumer protection in…

By – Samuel Lopez

Howard Hughes Closes $2.1 Billion Insurance…

By Samuel López | USA Herald In a deal that…

By – Samuel Lopez

America’s Legal Hiring Boom Is Here…

By Samuel López | USA Herald A quiet hiring boom…

By – Samuel Lopez

Skywatchers Events June 2026

Additional resources can be found through the National Aeronautics and…

By – Jackie Allen

Sky Tonight App Highlights June 2026’s…

More information is available through the official Sky Tonight website…

By – Jackie Allen

Domestic Violence Deaths Remain High as…

Only the children’s grandmother survived. Dodgen-Magee believes that communities, faith…

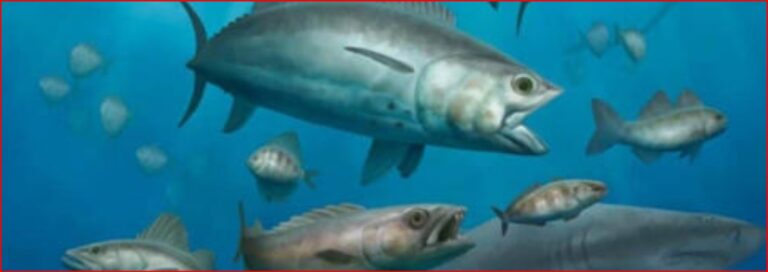

By – Jackie Allen

Qreiya 3 Fossil Discovery: Ancient Oceans…

Rise of the Percomorphs Seen on Qreiya 3 One of…

By – Jackie Allen

Elevated Safety Posture Ordered for NASA…

Learn more about international ISS partnerships at https://www.esa.int. Elevated Safety…

By – Jackie Allen

Taylor Swift’s Net Worth Reaches $2…

New Music Continues to Drive Taylor Swift’s Net Worth Swift…

By – Jackie Allen

Mysterious Death of Former Los Alamos…

Challenges in Determining Cause of Mysterious Death The Office of…

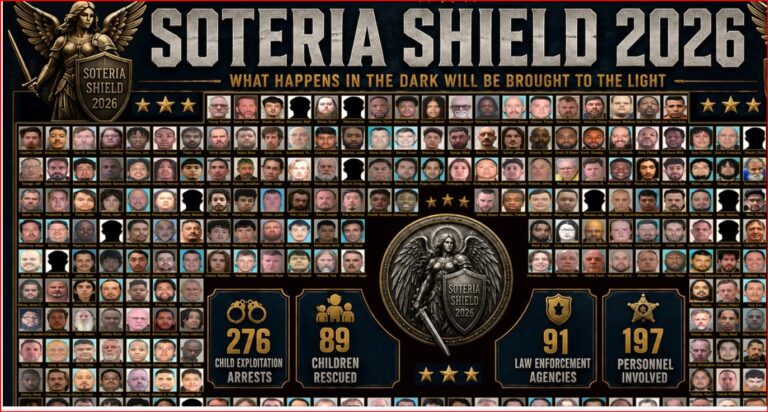

By – Jackie Allen

Operation Iron Pursuit Leads to Hundreds…

Authorities say the investigation sought not only to arrest offenders…

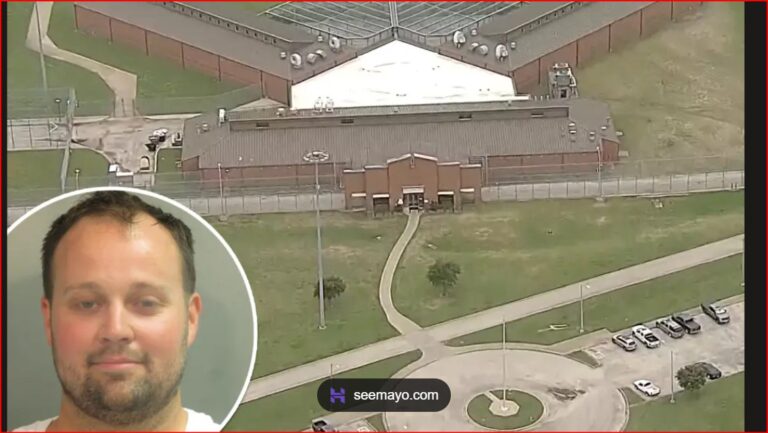

By – Jackie Allen

Josh Duggar Appeal Denied as Convicted…

Josh Duggar Transfer to Federal Medical Center in Fort Worth…

By – Jackie Allen

Sleeping Dog Documentary Chronicles Jeremy Corbell’s…

The film reportedly features material that later appeared in recently…

By – Jackie Allen

Rare Blue Micromoon Lights Up the…

The Moon’s changing apparent size is simply a consequence of…

By – Jackie Allen

America’s Legal Hiring Boom Is Here…

By Samuel López | USA Herald A quiet hiring boom…

By – Samuel Lopez

Lawsuit Aims to Knock Out Trump’s…

That defense, however, may become one of the most closely…

By – Samuel Lopez

Trump Suggests Public Ownership of AI…

By Samuel López | USA Herald What if the next…

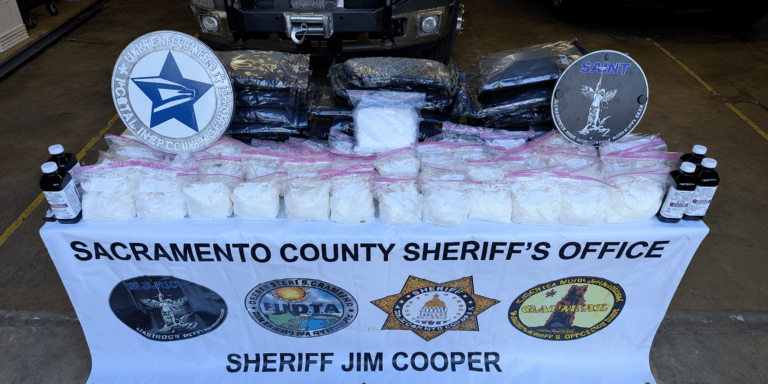

By – Samuel Lopez

Operation Spring Cleaning Delivers Massive Blow…

By Samuel López | USA Herald For three months, federal…

By – Samuel Lopez

Nichelle Nichols’ Final Mission Ends in…

By Samuel López | USA Herald The woman who helped…

By – Samuel Lopez

India Police Raid Uncovers 100,000 Fake…

India’s fake degree epidemic — potentially crossing one million fraudulent…

By – Samuel Lopez

Cannabis Giants Hit with Sweeping Class…

YOU MAY BE ENTITLED TO COMPENSATION Weitz & Luxenberg is…

By – Samuel Lopez

New Pill Doubles Survival for Pancreatic…

Federal Agencies Rush Public Patient Access The dramatic clinical success…

By – Tyler Brooks

FDA warns public as cookie firm…

“The FDA is issuing this alert to ensure customers are…

By – Tyler Brooks

Trump orders CDC to slash childhood…

Pediatricians Defy New Rules as Legal Battle Escalates The administration’s…

By – Tyler Brooks

USDA warns Americans over Salmonella in…

Federal Inspectors Order Kitchen Cleanout Federal safety teams are urging…

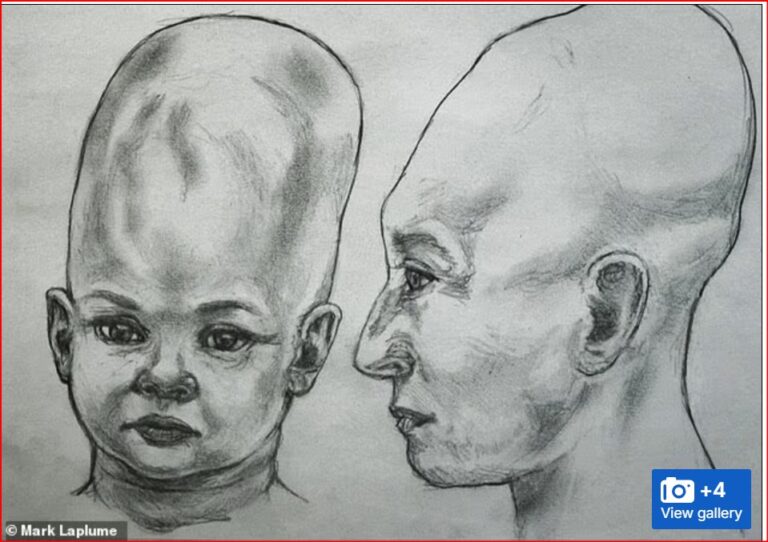

By – Tyler Brooks

Alien Coneheads: New DNA Study Doesn’t…

As a result, the study could not determine the individuals’…

By – Jackie Allen

2026 Stanley Cup Final Game 2:…

2026 Stanley Cup Final Game 2: A Thrilling Comeback The…

By – Tyler Brooks

Supreme Court signals 27 states could…

Timeline of the Historic Legal Battles The path to the…

By – Tyler Brooks

Mauricio Pochettino sounds alarm on Chris…

Mauricio Pochettino sounds alarm on Chris Richards injury By Tylor…

By – Tyler Brooks

USMNT star Chris Richards tears two…

The countdown to the 2026 World Cup begins The United…

By – Tyler Brooks

“Money” Mayweather Tucks Tail: $100 Million…

Mayweather’s representatives responded more carefully, stating that the boxer is…

By – Samuel Lopez

Mackenzie Shirilla Sent Text Messages to…

Police later concluded there was no evidence indicating she had…

By – Jackie Allen