Kelly Warner Law Firm Blames USA…

5/17/17 Based on the information released in The Washington Post article…

By – USA Herald

Aaron Kelly Law Firm Resorts To…

Professor Volokh thereafter filed a bar complaint against Dan Warner…

By – Jeff Watterson

Arizona Bar Opens Investigation on Attorney…

USA Herald recently reported on a developing story involving Attorneys…

By – Paul O'Neal

Josh Duggar Appeal Denied as Convicted…

Josh Duggar Transfer to Federal Medical Center in Fort Worth…

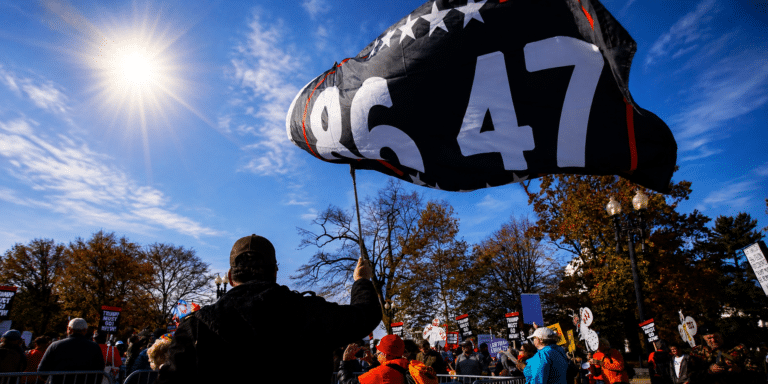

By – Jackie Allen

Federal Judge Lets ‘86 47’ Flag…

“Use of the statement ‘86 47’ and other variations will…

By – Samuel Lopez

Sabrina Carpenter Granted Restraining Order Following…

Citing “severe emotional distress,” the American pop star has successfully…

By – Tyler Brooks

The Diddy Fallout: Cassie Fights Back…

Two Diverging Paths These latest developments highlight an irreversible divide.…

By – Tyler Brooks

South Carolina Jury Clears Store Owner…

A South Carolina courtroom erupted with emotion Monday after a…

By – Tyler Brooks

Archer Aviation: The eVTOL Takeoff Facing…

Conclusion: A Bimodal Investment Archer Aviation is not failing operationally;…

By – Tyler Brooks

Sleeping Dog Documentary Chronicles Jeremy Corbell’s…

The film reportedly features material that later appeared in recently…

By – Jackie Allen

Kendall Jenner, Jacob Elordi and the…

Think about the kind of relationships this family was associated…

By – Nathan Kay

Chaotic Midnight Shooting Leaves 3 Bloodied…

Gunman Remains Loose as Motive Eludes Detectives Law enforcement officials…

By – Tyler Brooks

43-year-old Man Hospitalized After a Stranger…

Investigators Collect Critical Ballistic Evidence Crime scene investigators spent several…

By – Tyler Brooks

Hurricane Season Starts Today – Here’s…

Texas Faces Reduced But Dangerous Landfall Risk While the federal…

By – Tyler Brooks

U.S. Military Strike In The Eastern…

“The premeditated and intentional killings lack any plausible legal justification.”…

By – Tyler Brooks

Rare Blue Micromoon Lights Up the…

The Moon’s changing apparent size is simply a consequence of…

By – Jackie Allen

Murder-for-hire Ends with Life Sentence for…

Defense Argued Lack of Evidence in Murder-for-Hire Plot Defense attorneys…

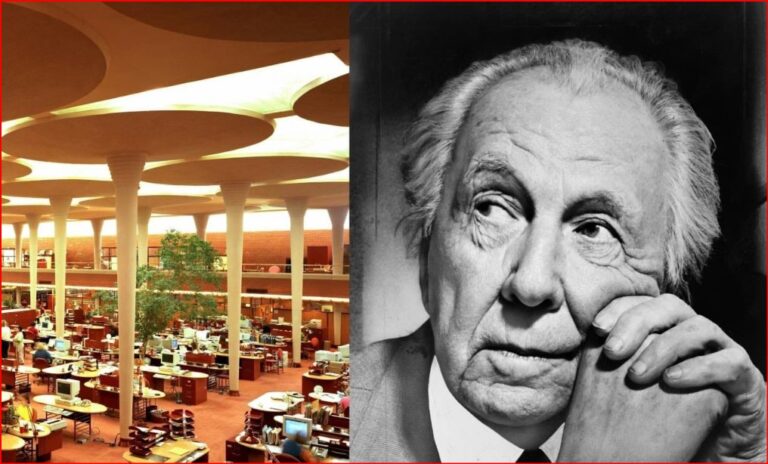

By – Jackie Allen

Frank Lloyd Wright and the Taliesin…

The Taliesin Murders In August 1914, Taliesin became the scene…

By – Jackie Allen

Hollywood at a Crossroads: Spencer Pratt…

Spencer Pratt Presents a Competing Vision for Recovery While…

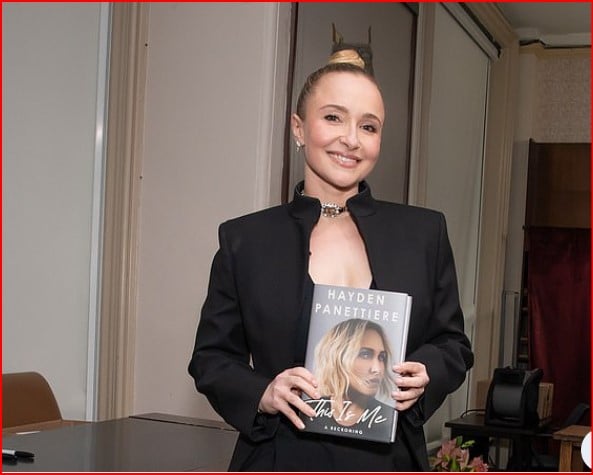

By – Jackie Allen

Hayden Panettiere has a Memoir About…

Rise to Fame on Heroes and Nashville Panettiere’s breakthrough came…

By – Jackie Allen

Blue Origin Rocket Explodes in Massive…

Satellite Launch Mission Now in Doubt Before the explosion, the…

By – Jackie Allen

Alien Coneheads: New DNA Study Doesn’t…

As a result, the study could not determine the individuals’…

By – Jackie Allen

Trump’s Alien.gov Reveal Turns Into Immigration…

A Dangerous Game with Public Trust The larger issue may…

By – Samuel Lopez

Trump’s UFO files reveal mysterious flying…

Information about NASA’s moon missions is available through NASA Apollo…

By – Jackie Allen

Who’s Lying? E. Jean Carroll Faces…

Todd Blanche Recused from E. Jean Carroll Matter One notable…

By – Jackie Allen

Super El Niño: Will 2026 be…

Professor Bill McGuire warned that temperatures in some parts of…

By – Jackie Allen

From a Casual Night Out to…

‘It Doesn’t Happen Here’: Quiet Suburb Left Shattered After Fatal…

By – Tyler Brooks

From Folklore to High Finance: The…

Thematic ETFs: Investment funds capturing capital through aerospace stock aggregation.…

By – Tyler Brooks

Anthropic Files Historic IPO Triggering Fierce…

Anthropic Files Historic IPO Triggering Fierce Wall Street Ethics War…

By – Tyler Brooks

Manhunt underway for Florida felon Adriel…

Aggravated battery on a pregnant victim Multiple counts of domestic…

By – Tyler Brooks

Hawaii Warns Communities of Impending Kilauea…

Dangerous Pollutants Drift Across Regions Massive clouds of water vapor,…

By – Tyler Brooks

New Pill Doubles Survival for Pancreatic…

Federal Agencies Rush Public Patient Access The dramatic clinical success…

By – Tyler Brooks

FDA warns public as cookie firm…

“The FDA is issuing this alert to ensure customers are…

By – Tyler Brooks

Trump orders CDC to slash childhood…

Pediatricians Defy New Rules as Legal Battle Escalates The administration’s…

By – Tyler Brooks

USDA warns Americans over Salmonella in…

Federal Inspectors Order Kitchen Cleanout Federal safety teams are urging…

By – Tyler Brooks

GKN Aerospace’s Biggest Battle May Not…

Commercial general liability policies often contain exclusions relating to pollutants,…

By – Samuel Lopez

Garden Grove Chemical Crisis Sparks Class…

By Samuel López | USA Herald A full-scale legal and…

By – Samuel Lopez

Wembanyama in Tears: Spurs Dethrone Thunder…

Victor Wembanyama: 22 points, controlling the paint defensively Julian Champagnie:…

By – Tyler Brooks

Supreme Court signals 27 states could…

Timeline of the Historic Legal Battles The path to the…

By – Tyler Brooks

Mauricio Pochettino sounds alarm on Chris…

Mauricio Pochettino sounds alarm on Chris Richards injury By Tylor…

By – Tyler Brooks

USMNT star Chris Richards tears two…

The countdown to the 2026 World Cup begins The United…

By – Tyler Brooks

“Money” Mayweather Tucks Tail: $100 Million…

Mayweather’s representatives responded more carefully, stating that the boxer is…

By – Samuel Lopez

Mackenzie Shirilla Sent Text Messages to…

Police later concluded there was no evidence indicating she had…

By – Jackie Allen