Kelly Warner Law Firm Blames USA Herald for Arizona Bar Investigation

In what appears to be a desperate attempt to defend multiple allegations of fraud on the courts, the Kelly Warner Law…

By – USA Herald

Aaron Kelly Law Firm Resorts To Attacking Former Client Again On KellyWarnerLaw.com – Pattern Recognized

Attorney Aaron Kelly and his law partner Daniel Warner are currently under investigation by the Arizona Bar for legal misconduct.…

By – Jeff Watterson

Arizona Bar Opens Investigation on Attorney Aaron Kelly

USA Herald recently reported on a developing story involving Attorneys Daniel Warner and Aaron Kelly. Both Warner and Kelly have…

By – Paul O'Neal

Apple AirPods Max 2 Launch Unveils Powerful Audio Upgrades and Smart Features

The Apple AirPods Max 2 launch has officially arrived, introducing a new generation of the company’s premium over-ear headphones with…

By – Rihem Akkouche

Trump’s Pastor Paula White Draws Scrutiny As Religion Enters Iran War Debate

INSIDE THE REPORT As tensions between the United States and Iran intensify, a parallel debate has erupted online and across…

By – Samuel Lopez

10-Year-Old Stabbing Luxury School Sparks Shock in Mountain View

A violent incident on a playground in a wealthy California enclave left parents and residents reeling after a 10-Year-Old stabbing…

By – Rachel Moore

Baltimore Teacher Elementary School Death Shocks Community

The sudden loss of a longtime educator has left a school community grieving after the Baltimore teacher elementary school death…

By – Rachel Moore

2k US Flights Cancelled as Midwest Blizzards Paralyze Major Airports

A powerful wave of winter storms has grounded travelers across the country, with 2k US flights cancelled Sunday as blizzard…

By – Rachel Moore

Infant Killed in Ambulance Crash After Drunk Driver Runs Red Light in Philadelphia

A heartbreaking tragedy unfolded early Sunday morning in Philadelphia, where an infant killed in ambulance crash has left a community…

By – Rachel Moore

Heat Wave Warning for Southern California Signals Historic March Temperatures

An extraordinary heat wave warning for Southern California is sending alarm bells across the region as meteorologists warn that a…

By – Rachel Moore

US Service Members in Iraq Plane Crash Identified After Deadly Military Incident

The U.S. military has revealed the identities of six crew members killed in a devastating aviation accident over Iraq, an…

By – Rachel Moore

Major March Snowstorm Paralyzes Minnesota as Travel Warnings Spread

A powerful winter system is sweeping across the Upper Midwest, burying communities in heavy snow and shutting down travel across…

By – Rihem Akkouche

Hapag Lloyd to Acquire ZIM as Executives Sell Millions in Shares Ahead of Deal

A major shake-up is unfolding in the global shipping industry as Hapag Lloyd to acquire ZIM becomes the centerpiece of…

By – Rihem Akkouche

Sharks in the Chicago River? Viral Sightings Turn Out to Be a Clever Movie Stunt

Residents and tourists in Chicago did a double take Saturday morning as mysterious fins sliced through the bright green water…

By – Rihem Akkouche

Raytheon Satellite Terminal Contract Expanded by $2 Billion in U.S. Air Force Deal

The U.S. military has dramatically expanded a key defense communications program, boosting the Raytheon satellite terminal contract by more than…

By – Rihem Akkouche

Meta 20% Workforce Cut Could Reshape Tech Giant as AI Spending Surges

A sweeping shift may be unfolding inside Meta Platforms, as reports suggest the social media giant could be preparing a…

By – Rihem Akkouche

Thomas Medlin Found Dead in Brooklyn Waters After Two-Month Search

A tragic chapter in a months-long search ended this week after authorities confirmed Thomas Medlin found dead, bringing painful closure…

By – Rachel Moore

American Refuelling Aircraft Crashed in Iraq During Military Operation

A tense search-and-rescue effort unfolded Thursday after an American refuelling aircraft crashed in Iraq, according to U.S. military officials, in…

By – Rachel Moore

Astronomers Witness Birth of a Magnetar in Explosive Cosmic Event

For the first time in recorded observation, scientists have watched the dramatic moment when a powerful stellar corpse emerged from…

By – Rachel Moore

Arizona Man Accused of Crucifying Pastor Pushes Judge For A Quick Death Sentence

By Samuel A. Lopez | USA Herald – An Arizona courtroom is now the center of a deeply disturbing case…

By – Samuel Lopez

Trump’s Laser Talk Sparks New Questions About America’s Secret Arsenal

President Donald Trump has once again done what he often does best in moments of war and tension: he dropped…

By – Samuel Lopez

The Northern Lights Return

The Northern Lights have a chance to be visible from several northern U.S. states on Tuesday night, forecasters at the…

By – Jackie Allen

February Unemployment Up as Job Losses Surprise Economists

February Unemployment Up as the latest labor market data revealed weaker-than-expected job growth and a slight increase in the national…

By – Jackie Allen

Late-Night Attack by Venezuelan National at Florida Beach

A late-night attack by a Venezuelan national has left a Florida community shaken after authorities say a 26-year-old man ambushed…

By – Jackie Allen

Trump’s War in Iran: Congress Confronts Escalation After U.S. Strikes

Trump’s War in Iran has triggered open conflict, casualties, and renewed constitutional debate in Washington. The crisis intensified following reports…

By – Jackie Allen

AI Deepfake Warfare Emerging As The Next Legal Battlefield In 2026 Election Cycle

Inside This Report Artificial intelligence can now generate hyper-realistic videos and voices that are nearly impossible to distinguish from reality—raising…

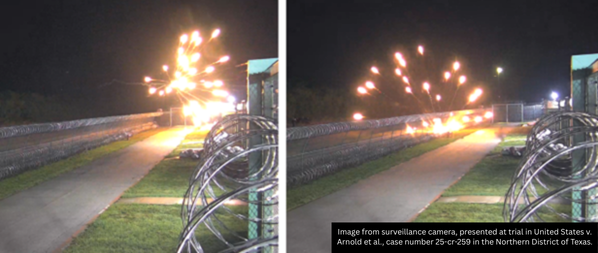

By – Samuel Lopez

A Violent July 4 Attack Leads To Federal Convictions For Nine Antifa Cell Members Convicted In Prairieland ICE Detention Center Shooting

Three Critical Points Readers Should Know A federal jury convicted nine alleged members of a North Texas Antifa cell for…

By – Samuel Lopez

Trump UFO Directive Could Shake Religion Science And Power Structures Worldwide

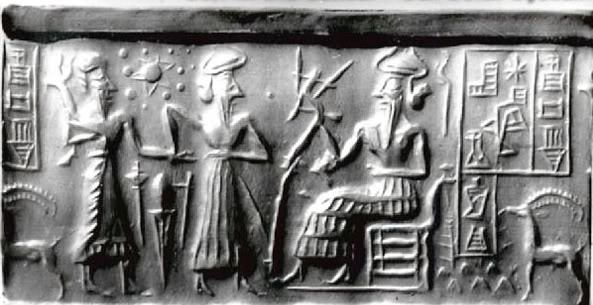

By Samuel A. Lopez | USA Herald – Something unusual is happening in Washington. President Donald Trump has ordered federal agencies to…

By – Samuel Lopez

Ancient Astronomers Warned That Strange Skies Precede Global Upheaval—Why Their Writings Are Being Revisited Today

By Samuel A. Lopez | USA Herald – Across thousands of years and multiple civilizations, humanity has always looked upward…

By – Samuel Lopez

Oil, War, and the Insurance Shockwave Already Hitting America

[USA HERALD] – The world’s most important shipping lane has once again become the center of a geopolitical storm—and this…

By – Samuel Lopez

US Navy Prepares To Escort Tankers Through Strait of Hormuz As Oil War Risks Escalate

By Samuel A. Lopez | USA Herald – The world’s most important oil chokepoint may soon be guarded by U.S. warships.…

By – Samuel Lopez

War Insurance Reality Americans Face Even When the Battlefield Is Overseas

By Samuel A. Lopez | USA Herald – When Americans hear news of airstrikes in the Persian Gulf, naval confrontations…

By – Samuel Lopez

Supreme Court Asked To Step In As 98-Year-Old Federal Judge Pauline Newman Fights Suspension From The Bench

[USA HERALD] – For more than four decades, one of the most influential judges in American patent law helped shape…

By – Samuel Lopez

Iran Appears To Have Conducted Its First Major Cyberattack Against A U.S. Company, Since The War Began – New Front Opens Against American Healthcare

An alleged Iran-linked cyberattack on Stryker appears to mark a dangerous turn in the conflict, pushing digital retaliation from nuisance-level…

By – Samuel Lopez

Beyond Gas Prices The Strait of Hormuz Crisis Could Hit Fertilizer, Plastics, Aluminum And Global Supply Chains

By Samuel Lopez | USA Herald – Right now, most Americans hearing about the Strait of Hormuz are thinking about…

By – Samuel Lopez

When The Files Are Finally Unsealed The Most Mind-Bending Truth May Not Be What We Expect

[USA HERALD] – There is a widespread assumption that if governments release their most highly classified files related to unidentified…

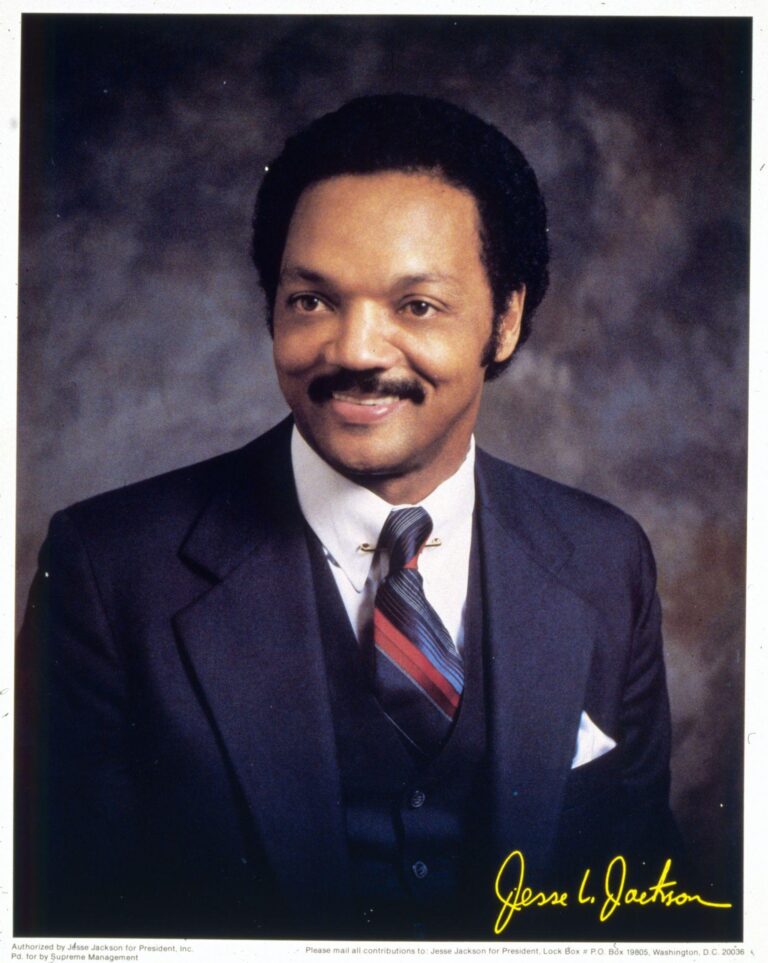

By – Samuel Lopez

Civil Rights Icon Rev. Jesse Jackson Dies at 84 As President Trump Issues Personal Tribute

[USA HERALD] — The Rev. Jesse Jackson, a towering figure of the American civil rights movement whose career spanned more than…

By – Samuel Lopez

The World Cup Security Reckoning: Trump Warns Iran Soccer Team About Safety As War Tensions Spill Into Global Sports

By Samuel A. Lopez | USA Herald – A short Truth-Social post from President Donald Trump on Thursday morning is now…

By – Samuel Lopez

Cadillac Names Inaugural Formula 1 Car MAC-26 in Tribute to Mario Andretti Ahead of 2026 Australian Grand Prix Debut

Cadillac has officially revealed the name of its first Formula 1 challenger, confirming that its 2026 car will be called…

By – Ahmed Boughalleb

Norway Tops Medal Table After Day 13 at 2026 Winter Olympics as Team USA Surges Into Second Place

With 13 days complete at the 2026 Milan Cortina Winter Olympics, Norway sits atop the overall medal standings, collecting 34…

By – Ahmed Boughalleb

Olympic Science Explained: How Figure Skaters Spin at Blinding Speeds Without Getting Dizzy

When Amber Glenn finishes her routine, the arena usually rises with her. The music builds, her blades carve a tight…

By – Tyler Brooks

Olympic Villages Run Out of Condoms at 2026 Milan-Cortina Games

Condom supplies in the Olympic Villages at the 2026 Winter Games have been temporarily depleted, the Milan-Cortina organizing committee confirmed,…

By – Tyler Brooks